Transferring styles for reduced texture bias and improved robustness in semantic segmentation networks

Ben Hamscher1 • Edgar Heinert2,3 • Annika Mütze2,3 • Kira Maag1 • Matthias Rottmann2,3

1 Department of Computer Science, Heinrich-Heine-University Dusseldorf, Dusseldorf, Germany

2 Institute of Computer Science, Osnabrück University, Germany

3 Department of Mathematics, University of Wuppertal, Wuppertal, Germany

European Conference on Artificial Intelligence (ECAI) 2025 · 23 Oct 2025

Abstract

Making Semantic Segmentation Less Texture-Biased and Way More Robust

Deep neural networks often lean heavily on textures instead of shapes. That shortcut hurts robustness and is not how we humans perceive the world. We ask a simple question:

Can style transfer break this habit in semantic segmentation without destroying structure?

Our answer: yes.

What we do

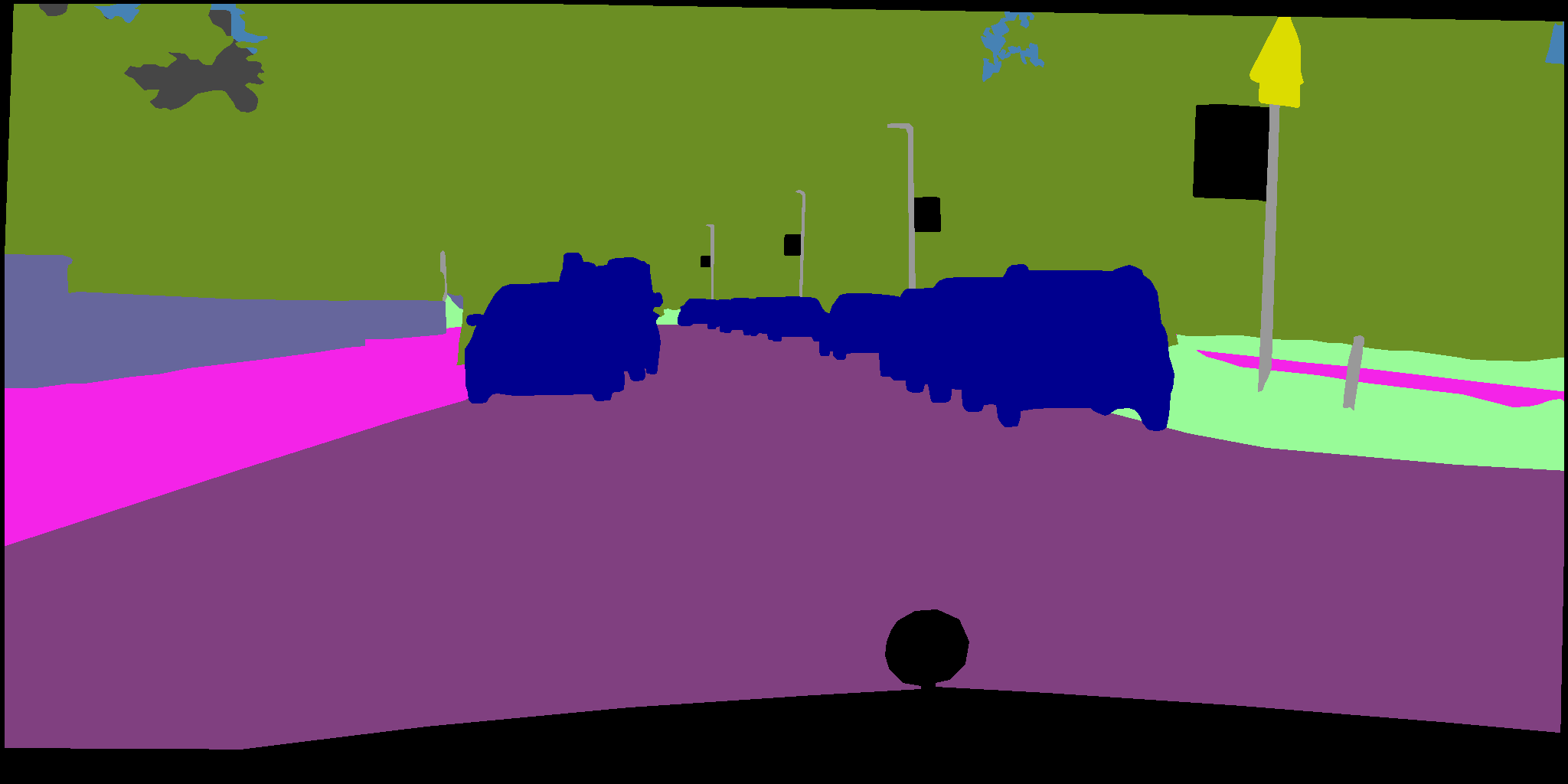

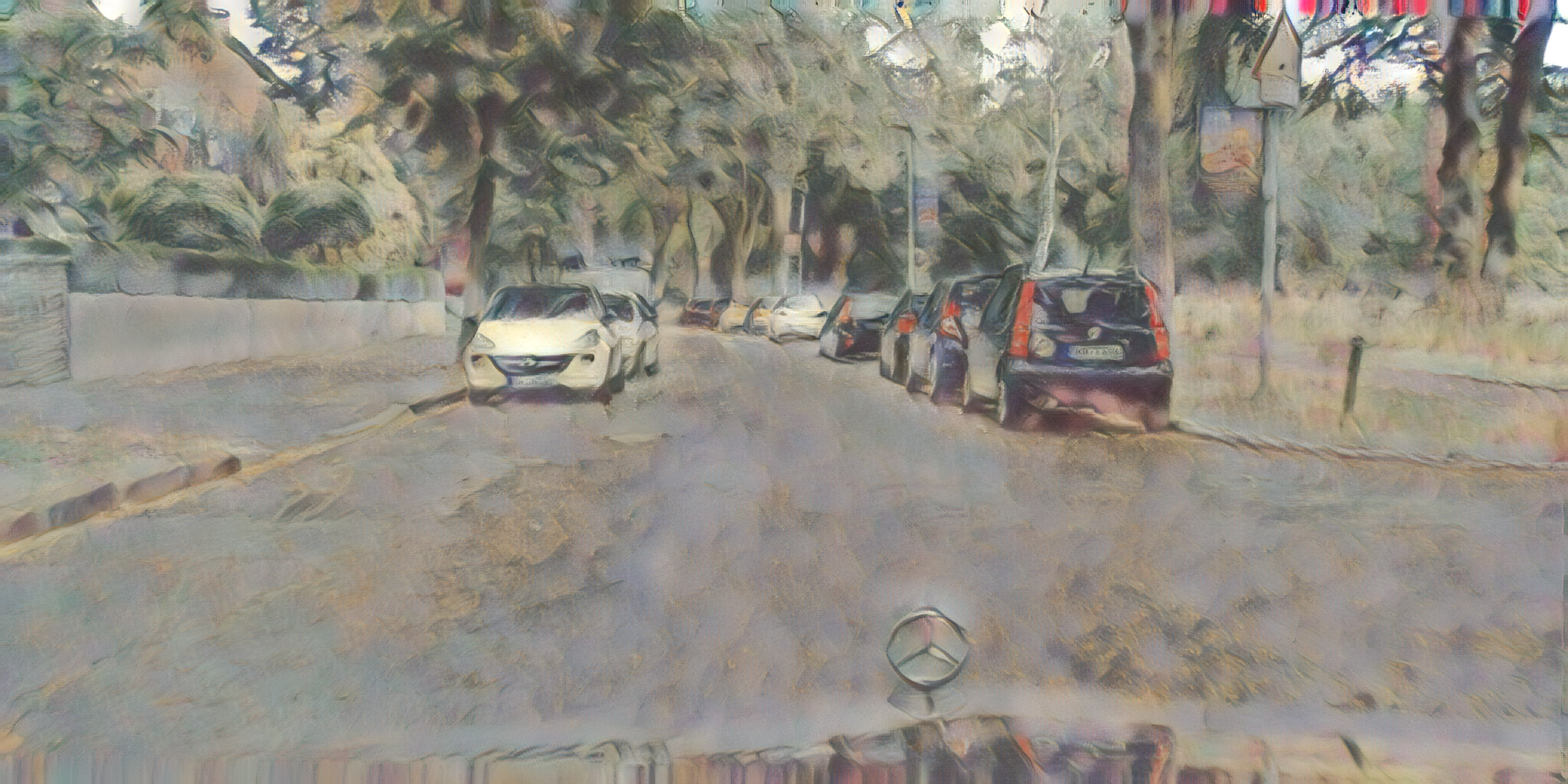

• Apply style transfer either to whole images or locally, within randomly generated Voronoi regions

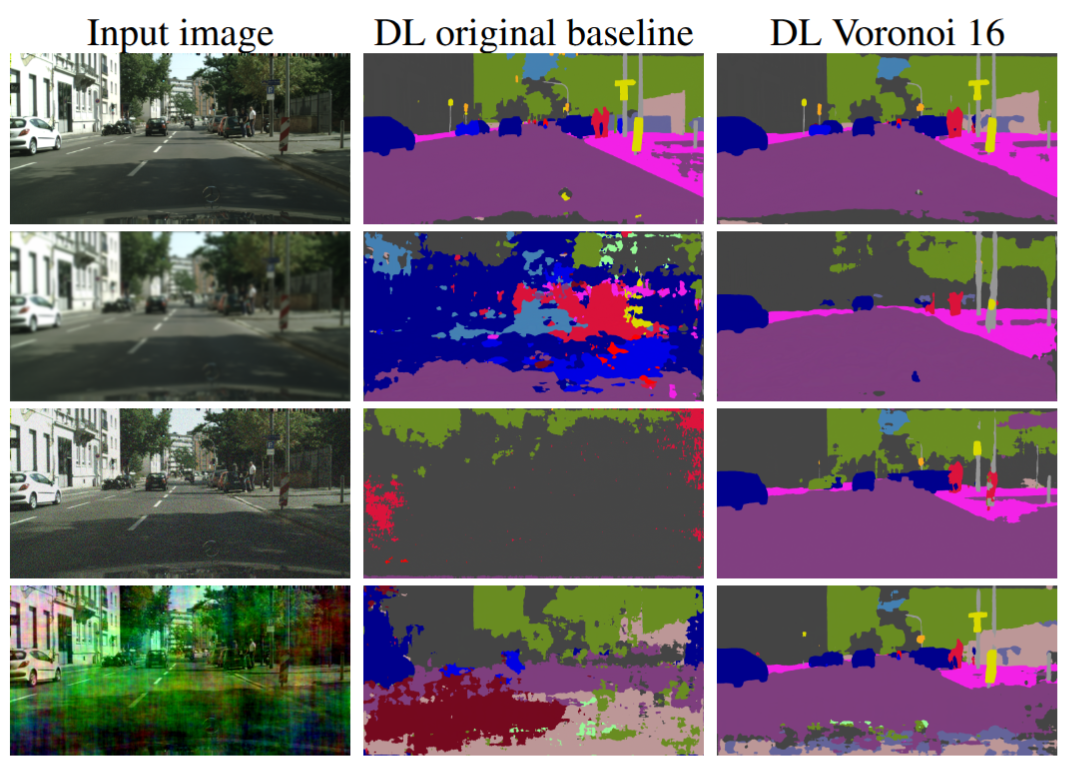

• Preserve global structure while scrambling texture cues

• Train segmentation models to rely more on shape and geometry, not surface appearance
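The local variant can be illustrated with a minimal sketch. It assumes a fully stylized copy of the image already exists (the style transfer model itself is not shown) and that regions are nearest-seed Voronoi cells around uniformly random seed points. The function names and the parameters `num_regions` and `stylized_fraction` are hypothetical, chosen only for this illustration, and need not match the authors' implementation.

```python
import numpy as np

def voronoi_labels(height, width, num_regions, rng):
    """Label each pixel with the index of its nearest random seed point."""
    seeds = rng.uniform([0, 0], [height, width], size=(num_regions, 2))
    ys, xs = np.mgrid[0:height, 0:width]
    best = np.full((height, width), np.inf)
    labels = np.zeros((height, width), dtype=np.int64)
    # Loop over seeds to keep memory at O(H * W) instead of O(H * W * regions).
    for k, (sy, sx) in enumerate(seeds):
        d2 = (ys - sy) ** 2 + (xs - sx) ** 2
        closer = d2 < best
        labels[closer] = k
        best[closer] = d2[closer]
    return labels

def stylize_locally(original, stylized, num_regions, stylized_fraction, rng):
    """Paste stylized pixels into a random subset of Voronoi regions.

    `original` and `stylized` are (H, W, C) arrays of the same shape.
    Returns the composite image and the boolean mask of stylized pixels.
    """
    h, w = original.shape[:2]
    labels = voronoi_labels(h, w, num_regions, rng)
    num_stylized = int(round(stylized_fraction * num_regions))
    chosen = rng.choice(num_regions, size=num_stylized, replace=False)
    mask = np.isin(labels, chosen)
    composite = np.where(mask[..., None], stylized, original)
    return composite, mask

rng = np.random.default_rng(0)
original = np.zeros((32, 48, 3))   # stand-in for a real image
stylized = np.ones((32, 48, 3))    # stand-in for its stylized version
composite, mask = stylize_locally(original, stylized, 8, 0.25, rng)
```

Because only pixel values are swapped and region boundaries never move image content, the segmentation labels of the original image remain valid for the composite.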

What we find

• Significantly reduced texture bias

• Strong robustness gains against common image corruptions and adversarial attacks

• Works across CNN and Transformer architectures on both Cityscapes and PASCAL Context

By forcing models to look beyond texture, our method aligns model behaviour with human perception and improves generalization.

Key Takeaways

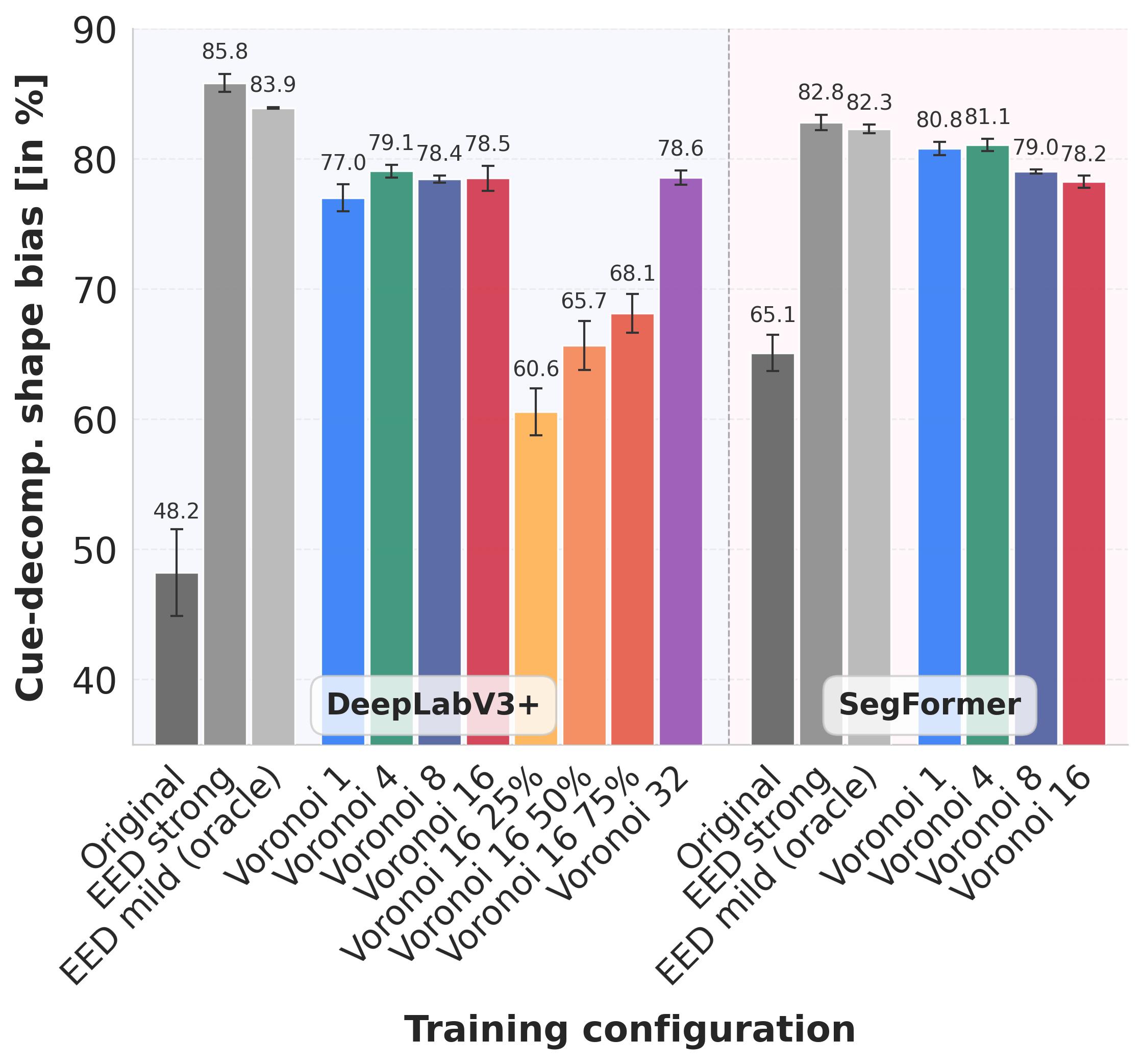

1. Style transfer consistently increases shape bias

• All models trained with stylized data rely more on shape and less on texture than models trained on original images.

• This holds across:

◦ Different datasets (Cityscapes, PASCAL Context)

◦ Different architectures (CNNs and Transformers)

• Transformer models start with a higher shape bias, but CNNs benefit the most from stylization, showing gains of 30+ percentage points in shape bias.

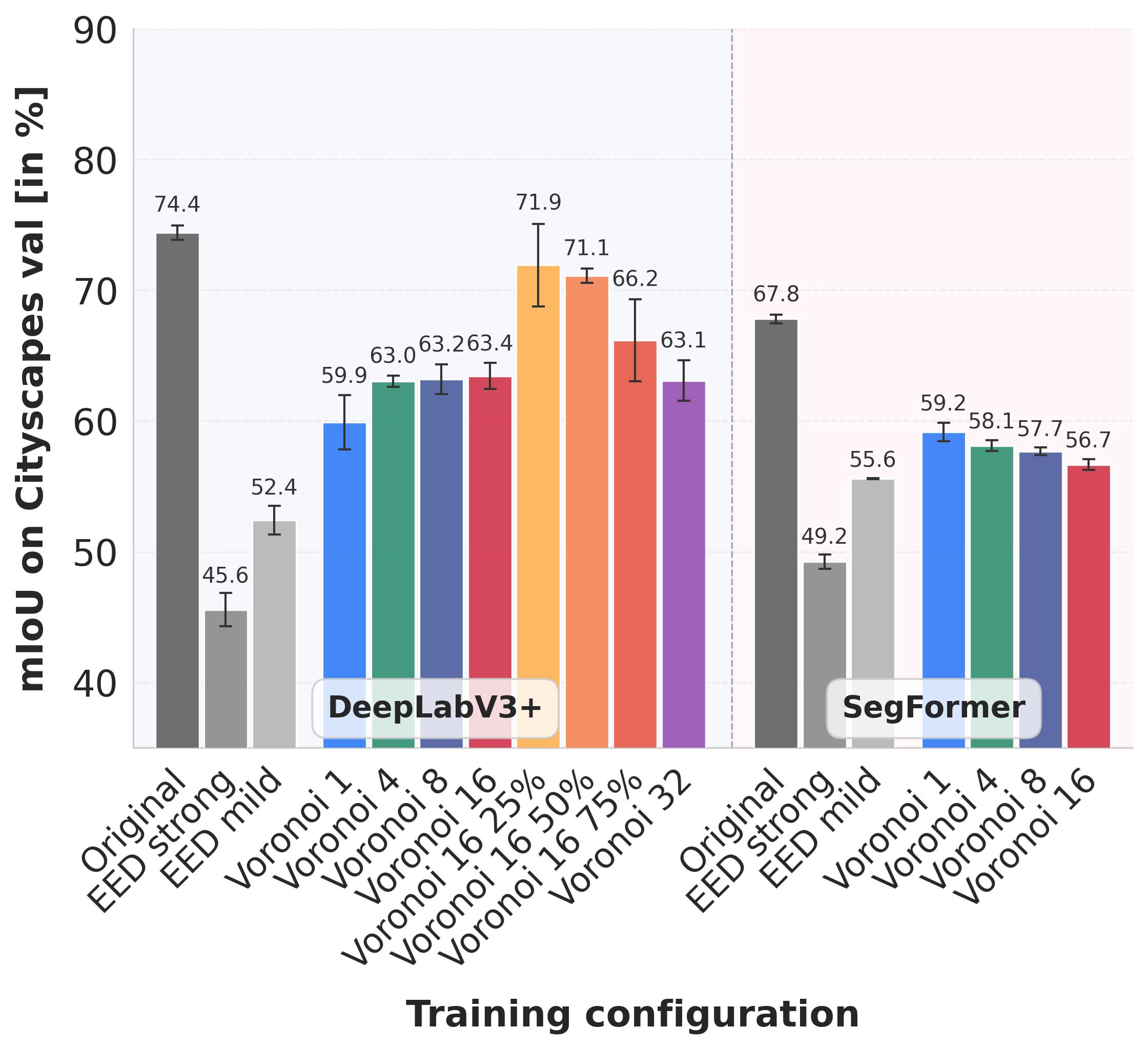

2. Shape bias (https://arxiv.org/abs/2503.12453) can be precisely controlled

• By adjusting:

◦ the number of Voronoi regions per image

◦ the fraction of stylized regions

• We obtain a smooth trade-off between:

◦ preserving performance on original images (mIoU)

◦ increasing shape bias

• Partial stylization (e.g. 25%) delivers substantial shape-bias gains while barely sacrificing accuracy.

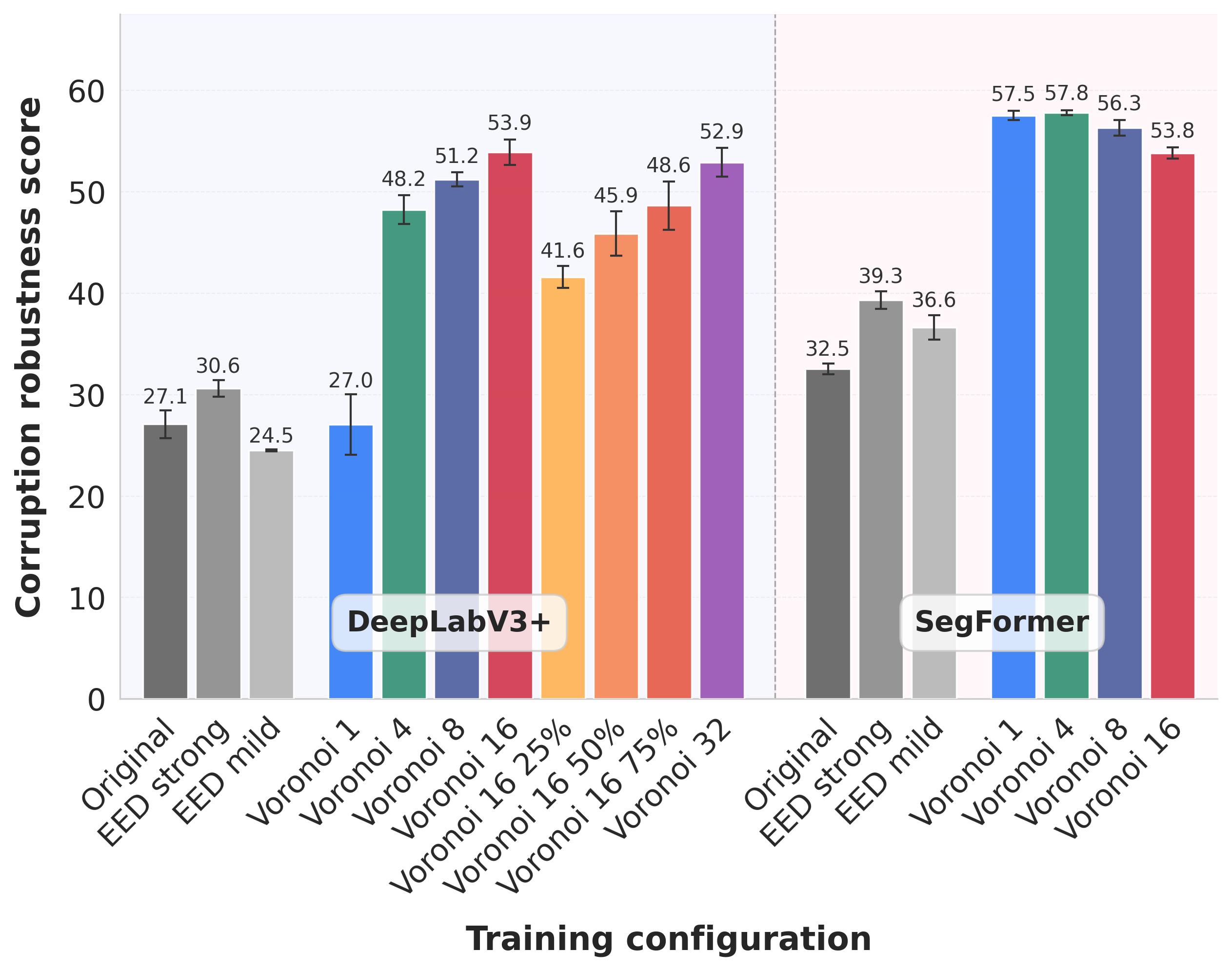

3. Strong gains in robustness to image corruptions

• Style transfer dramatically improves robustness to noise, blur, and other corruptions.

• Relative robustness nearly doubles for the CNN DeepLabV3+ and increases by ~80% for the Transformer SegFormerB.

• Partial stylization again offers a sweet spot:

◦ robustness close to fully stylized models

◦ accuracy close to the original baseline
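To make the robustness numbers concrete, here is a small sketch of how such a score could be computed. It assumes that relative robustness means the ratio of mIoU on corrupted inputs to mIoU on clean inputs; that definition is an assumption of this example and is not stated on this page. The helper names are hypothetical, and mIoU is computed per image for brevity, whereas benchmark mIoU is normally accumulated over a whole dataset.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean intersection-over-union over classes present in pred or target."""
    ious = []
    for c in range(num_classes):
        p, t = pred == c, target == c
        union = np.logical_or(p, t).sum()
        if union == 0:
            continue  # class absent from both maps, so it is skipped
        ious.append(np.logical_and(p, t).sum() / union)
    return float(np.mean(ious))

def relative_robustness(miou_corrupted, miou_clean):
    """Assumed definition: fraction of clean mIoU retained under corruption."""
    return miou_corrupted / miou_clean

# Toy 2x2 label maps: the prediction on the corrupted input flips one pixel.
target = np.array([[0, 0], [1, 1]])
pred_clean = np.array([[0, 0], [1, 1]])
pred_corrupted = np.array([[0, 1], [1, 1]])

miou_clean = mean_iou(pred_clean, target, num_classes=2)
miou_corrupted = mean_iou(pred_corrupted, target, num_classes=2)
score = relative_robustness(miou_corrupted, miou_clean)
```

Under this reading, a score near 1 means the model loses almost no accuracy under corruption, so a partially stylized model can be compared to the baseline on both clean mIoU and this ratio.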

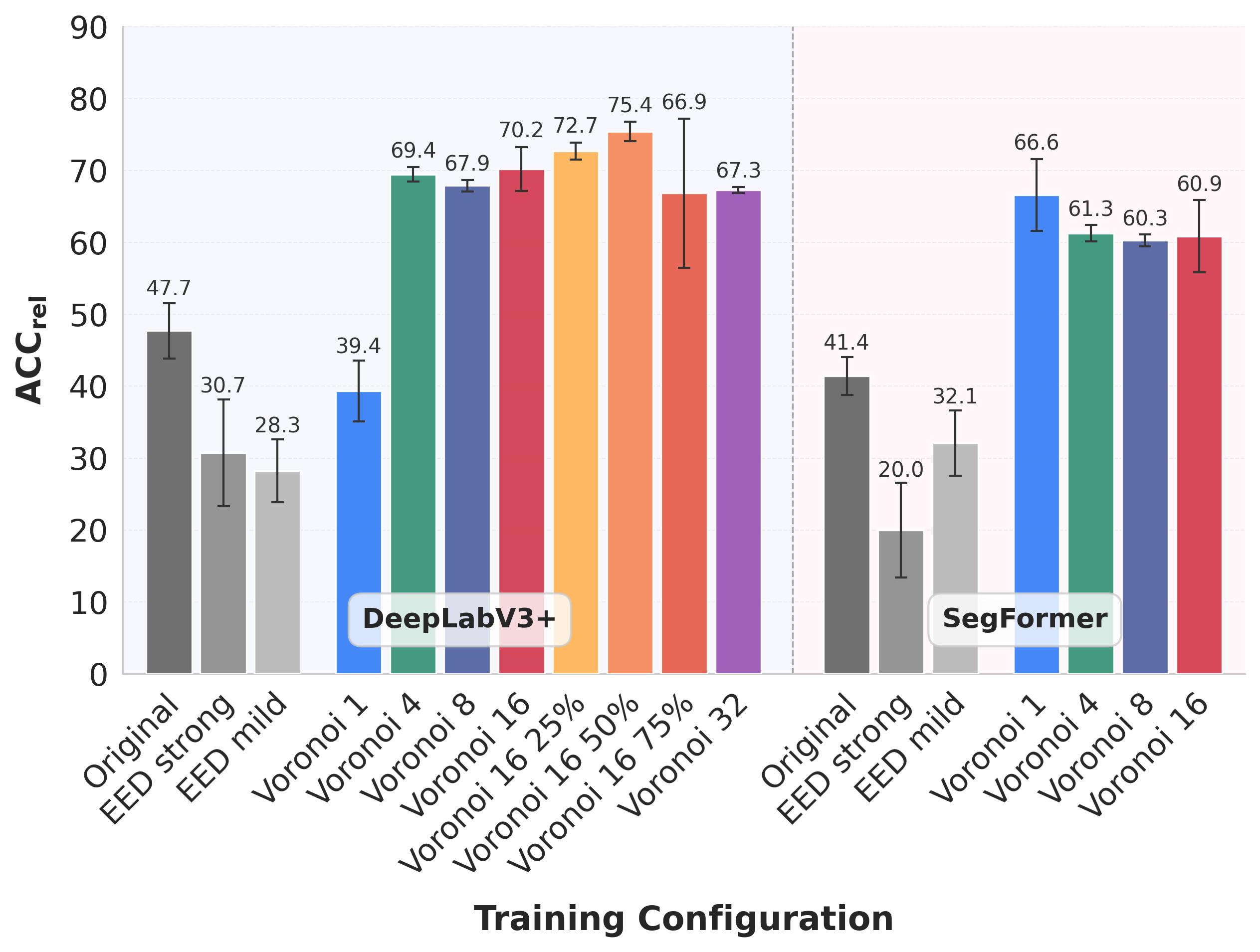

4. Improved robustness to adversarial attacks

• Models trained with stylized images are significantly harder to fool with adversarial perturbations.

• Stylized models outperform:

◦ standard training

◦ baselines trained on EED (https://github.com/eheinert/reducing-texture-bias-of-dnns-via-eed/) data

• Interestingly, partially stylized models are often more robust than fully stylized ones.

• Gains are consistent across:

◦ untargeted and targeted attacks

◦ different perturbation strengths
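The difference between untargeted and targeted attacks, and the role of the perturbation strength, can be sketched with a single-step sign-gradient attack (FGSM-style). This page does not name the attacks used in the paper, so FGSM is chosen here only as a common illustration. The per-pixel linear classifier with weight matrix `weights` is a hypothetical stand-in for a segmentation network, used so that the gradient can be written by hand.

```python
import numpy as np

def loss_and_grad(image, weights, labels):
    """Mean per-pixel cross-entropy of a toy linear classifier.

    image: (H, W, C) in [0, 1]; weights: (C, K); labels: (H, W) ints in [0, K).
    Returns the loss and its gradient with respect to the image.
    """
    logits = image @ weights
    logits = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    probs = np.exp(logits)
    probs /= probs.sum(axis=-1, keepdims=True)
    onehot = np.eye(weights.shape[1])[labels]
    loss = -np.log((probs * onehot).sum(axis=-1)).mean()
    grad = (probs - onehot) @ weights.T / labels.size
    return loss, grad

def fgsm(image, weights, labels, eps, targeted=False):
    """One sign-gradient step with L-infinity budget eps.

    Untargeted: `labels` is the ground truth and the loss is pushed up.
    Targeted: `labels` is the attacker's target map and the loss is pushed down.
    """
    _, grad = loss_and_grad(image, weights, labels)
    direction = -1.0 if targeted else 1.0
    return np.clip(image + direction * eps * np.sign(grad), 0.0, 1.0)

rng = np.random.default_rng(0)
image = rng.uniform(0.2, 0.8, size=(4, 4, 3))
weights = rng.normal(size=(3, 5))
truth = rng.integers(0, 5, size=(4, 4))

clean_loss, _ = loss_and_grad(image, weights, truth)
adversarial = fgsm(image, weights, truth, eps=0.05)
adversarial_loss, _ = loss_and_grad(adversarial, weights, truth)
```

Here `eps` plays the role of the perturbation strength: sweeping it and recording mIoU on the perturbed images is one way to compare models across attack budgets.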

Acknowledgments

A. M., E. H. and M. R. acknowledge support through the junior research group project “UnrEAL” by the German Federal Ministry of Research, Technology and Space (BMFTR), grant no. 01IS22069. A. M. and M. R. further acknowledge support through the project “REFRAME” by BMFTR, grant no. 01IS24073C.

Citation

@inproceedings{hamscher2025transferring,
  title = {Transferring Styles for Reduced Texture Bias and Improved Robustness in Semantic Segmentation Networks},
  author = {Hamscher, Ben and Heinert, Edgar and M{\"u}tze, Annika and Maag, Kira and Rottmann, Matthias},
  booktitle = {Proceedings of the European Conference on Artificial Intelligence (ECAI)},
  year = {2025}
}

Contact

Have questions or want to collaborate? Reach out:

- Email: ben.hamscher[at]hhu.de